The DIG seminar takes place on a regular basis with both invited speakers and speakers from within the DIG team. Seminars from before September 2023 can be found here. This calendar contains future (and some past) seminars.

- Tuesday, June 17, 2025, 11:45, 4A301

Adrien Coulet (INRIA)

Data- and knowledge-driven approaches for step-by-step guidance to differential diagnosis

Diagnosis guidelines provide recommendations based on expert consensus that cover the majority of the population, but often overlook patients with uncommon conditions or multiple morbidities. We will present and compare two alternative approaches that provide step-by-step guidance for the differential diagnosis of anemia and lupus. The first approach relies on reinforcement learning and observational data; the second relies on large language models and domain knowledge.

- Tuesday, May 27, 2025, 11:45, 4A301

Maximilian Egger

Robust Knowledge Graph Cleaning

Data quality is necessary to use the information represented in a dataset properly and reliably. The growing volume of data makes data preparation and cleaning increasingly difficult. In addition, more diverse data structures, such as graphs, are now used in databases and need to be handled differently. This calls for robust methods to increase data integrity, scalable approaches for finding and fixing errors, and locally oriented algorithms that can focus attention where it is needed.

This talk provides an overview of my past, present, and future projects on knowledge graphs, exploring their potential for improving data cleanliness and robustness.

- Tuesday, May 13, 2025, 11:45, 4A301

Nikola Simidjievski

Synthesis & Augmentation of Tabular Data In the Age of Foundation Models

Foundation models (large, performant, pre-trained models) have shown remarkable success in applications that predominantly focus on vision, language, and sound data. Tabular data, on the other hand, is one of the most prevalent data modalities in many critical domains of business, science, and healthcare, yet it has seen limited benefits from these advances. Tabular data poses unique challenges related to heterogeneity, dimensionality, and scarcity, as well as a lack of explicit symmetries, implicit structures, and incomplete prior knowledge, all of which limit how we construct, train, and apply or transfer large models for tabular data.

Data synthesis is one of the remedies for overcoming some of these challenges: it can help improve model performance in data-scarce but critical applications, and it can also be used as a data augmentation mechanism for training more robust models. Although previous research has sought to adapt the successes of generative modeling of homogeneous modalities to tabular modalities, defining an effective generator for tabular data remains an open problem. In this talk, I will present several novel data-centric approaches for data synthesis that focus on tabular data. Our key innovation is transforming recent pre-trained tabular classifiers into data generators and leveraging their learned information in the input and manifold space. These methods are fast, require no additional training, and can be applied to any downstream predictive model. They consistently improve performance, especially on small datasets where training well-performing models is hard. We also uncover several properties and benefits that can inform how we design robust and performant general-purpose tabular foundation models.

- Tuesday, April 29, 2025, 11:45, 4A301

Simon Razniewski (TU Dresden)

GPTKB: Comprehensively Materializing Factual LLM Knowledge

LLMs have significantly advanced NLP and AI. Besides their ability to perform a wide range of procedural tasks, a major factor in their success is their internalized factual knowledge. Since Petroni et al. (2019), analyzing this knowledge has gained attention. However, most approaches investigate one question at a time via modest-sized pre-defined samples, introducing an “availability bias” (Tversky and Kahneman, 1973) that prevents the discovery of knowledge (or beliefs) of LLMs beyond the experimenter’s predisposition. To address this challenge, we propose a novel methodology to comprehensively materialize an LLM’s factual knowledge through recursive querying and result consolidation. As a prototype, we employ GPT-4o-mini to construct GPTKB, a large-scale knowledge base (KB) comprising 101 million triples for over 2.9 million entities. This work marks a milestone in two areas. For LLM research, it provides, for the first time, constructive insights into the scope and structure of LLMs’ knowledge (or beliefs), and its strengths and weaknesses. For KB construction, it pioneers new pathways for the long-standing challenge of general-domain KB construction. GPTKB is accessible at https://gptkb.org.
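The abstract describes materializing an LLM's knowledge through recursive querying and result consolidation. The sketch below shows only the control flow of that idea, under assumptions. `query` is a hypothetical stand-in for a prompt to the model. Consolidation is reduced to discarding exact duplicate triples. A `budget` bounds the number of prompts. This is not the GPTKB implementation.

```python
from collections import deque
from typing import Callable, Iterable

# Hypothetical stand-in for one LLM prompt: given a subject, return (predicate, object) pairs.
QueryFn = Callable[[str], Iterable[tuple[str, str]]]


def materialize(seed: str, query: QueryFn, is_entity: Callable[[str], bool],
                budget: int = 100) -> set[tuple[str, str, str]]:
    """Breadth-first recursive querying, starting from a single seed entity."""
    kb: set[tuple[str, str, str]] = set()  # a set merges exact duplicate triples
    seen = {seed}
    frontier = deque([seed])
    queried = 0
    while frontier and queried < budget:  # `budget` caps the number of prompts
        subject = frontier.popleft()
        queried += 1
        for predicate, obj in query(subject):
            kb.add((subject, predicate, obj))
            # Objects that are entities are queried in turn (the recursive step).
            if is_entity(obj) and obj not in seen:
                seen.add(obj)
                frontier.append(obj)
    return kb


# Toy "model answers" standing in for real LLM output.
toy = {
    "Paris": [("capitalOf", "France"), ("type", "city")],
    "France": [("memberOf", "European Union")],
    "European Union": [],
}
kb = materialize("Paris", lambda s: toy.get(s, []), lambda o: o in toy)
```

The frontier is a FIFO queue, so entities are expanded in order of their distance from the seed. A real system would also need to decide which objects count as entities and how to merge near-duplicate answers. Here, `is_entity` is a placeholder for both decisions.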

- Tuesday, April 8, 2025, 11:45, 4A125

Pratik Karmakar

ProvSQL: Provenance and Probabilistic Querying in Uncertain Databases

Probabilistic databases provide a powerful framework for managing and querying uncertain data, enabling principled reasoning under uncertainty. ProvSQL extends PostgreSQL to support provenance tracking and probability computation in probabilistic databases, leveraging provenance circuits to efficiently compute probabilities and Shapley-based data valuations. In this talk, we introduce ProvSQL, demonstrate its capabilities, and explore a key use case: content-based image retrieval from the COCO dataset. We show how probabilistic query evaluation and data valuation techniques enhance explainability and trust in AI-driven decision-making.
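ProvSQL computes probabilities and Shapley-based valuations from provenance circuits. The sketch below makes these two quantities concrete using brute force over a Boolean lineage written as a DNF, which is a simplification of a circuit. It enumerates every possible world and every coalition, so it is exponential and suitable only for tiny examples. The function names are our own and are not part of ProvSQL's SQL interface.

```python
from itertools import combinations, product
from math import factorial

# Lineage of a Boolean query answer in DNF: a list of monomials, each a set of tuple ids.


def holds(lineage, present):
    """True if some derivation (monomial) uses only tuples that are present."""
    return any(monomial <= present for monomial in lineage)


def probability(lineage, probs):
    """Exact query probability under tuple independence, by enumerating possible worlds."""
    ids = sorted(probs)
    total = 0.0
    for bits in product([False, True], repeat=len(ids)):
        world = {t for t, keep in zip(ids, bits) if keep}
        weight = 1.0
        for t, keep in zip(ids, bits):
            weight *= probs[t] if keep else 1.0 - probs[t]
        if holds(lineage, world):
            total += weight
    return total


def shapley(lineage, players):
    """Exact Shapley value of each tuple in the game v(S) = 1 if holds(lineage, S) else 0."""
    n = len(players)
    values = {}
    for p in players:
        others = [q for q in players if q != p]
        phi = 0.0
        for k in range(n):  # k = size of the coalition that p joins
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for coalition in combinations(others, k):
                s = set(coalition)
                phi += weight * (holds(lineage, s | {p}) - holds(lineage, s))
        values[p] = phi
    return values


# Lineage of (a AND b) OR c, with each tuple present with probability 0.5.
lineage = [{"a", "b"}, {"c"}]
print(probability(lineage, {"a": 0.5, "b": 0.5, "c": 0.5}))  # 0.625
print(shapley(lineage, ["a", "b", "c"]))  # c gets 2/3; a and b get 1/6 each
```

Tuple `c` alone suffices to derive the answer, while `a` and `b` are useful only together. The valuation reflects this: the three values sum to 1, and `c` receives the largest share.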

- Tuesday, March 25, 2025, 11:45, 4A301

Gaël Varoquaux (INRIA)

Tabular foundation models: priors for numbers and strings

Deep learning typically does not outperform tree-based models on tabular data, which can often be explained by the small size of such datasets. For images, sound, and text, the solution has been pretrained models, leading to foundation models that are adapted and reused for many tasks. I will discuss the challenges of bringing these ideas to tabular learning, and the progress that we have made in building priors for tables, i.e., columns of different natures, with numbers and strings.

- Tuesday, March 18, 2025, 11:45, 4A301

Pierre Monnin (INRIA)

Neuro-symbolic approaches for the knowledge graph lifecycle

In the Web of Data, an increasing number of knowledge graphs (KGs) are concurrently published, edited, and accessed by human and software agents. Their wide adoption makes the tasks of their lifecycle essential: construction, refinement (e.g., matching, link prediction), mining, and usage to support applications (e.g., explainable AI, recommender systems). However, all these tasks must cope with the inherent heterogeneity of KGs, e.g., in terms of granularities, vocabularies, and completeness. In addition, scalability issues arise due to their increasing size and combinatorial nature. In my talk, I will present my research on neuro-symbolic approaches for the KG lifecycle, intertwining domain knowledge from ontologies, deductive reasoning, analogical reasoning, and machine learning models. Throughout my presentation, I will show that such approaches enhance models by improving their semantic awareness, frugality, and the semantic interpretability of their latent representation space.

- Tuesday, March 4, 2025, 11:45, 4A301

Ken Satoh (National Institute of Informatics, Japan)

Translating German traffic cases into logical rules

This is joint work with May Myo Zin at my center and Georg Borges at Saarland University. In this talk, I will report on our work on extracting normative sentences from German traffic cases and translating them into logical rules. The development of autonomous vehicles (AVs) requires a comprehensive understanding of both explicit and implicit traffic rules to ensure legal compliance and safety. While explicit traffic laws are well-defined in statutes and regulations, implicit rules derived from judicial interpretations and case law are more nuanced and challenging to extract. This research first investigates the potential of Large Language Models (LLMs), particularly GPT-4o, for automating the extraction of implicit traffic normative sentences from judicial decisions. We then investigate how to translate these normative sentences into a logical form, exploring the use of LLMs to automate the translation of traffic rules into PROLOG, a declarative programming language well suited to encoding logical rules and relationships. The proposed methodology consists of three key phases: extracting traffic rules from diverse textual sources, structuring them into Logical English (LE) for clarity and consistency, and translating them into PROLOG representations using advanced natural language processing (NLP) techniques, including in-context learning and fine-tuning. The experimental results demonstrate the effectiveness of LLMs in automating this process, achieving high translation accuracy.

- Tuesday, February 4, 2025, 11:45, 4A125

Fabian Suchanek

YAGO

In this talk I will present the newest version of YAGO, the knowledge base that we are building with several members of the DIG team. I will show why we build it, how we build it, and how it can be used. This will also be an occasion for me to get your feedback on our work.

- Tuesday, January 21, 2025, 11:45, 4A301

Simon Delarue

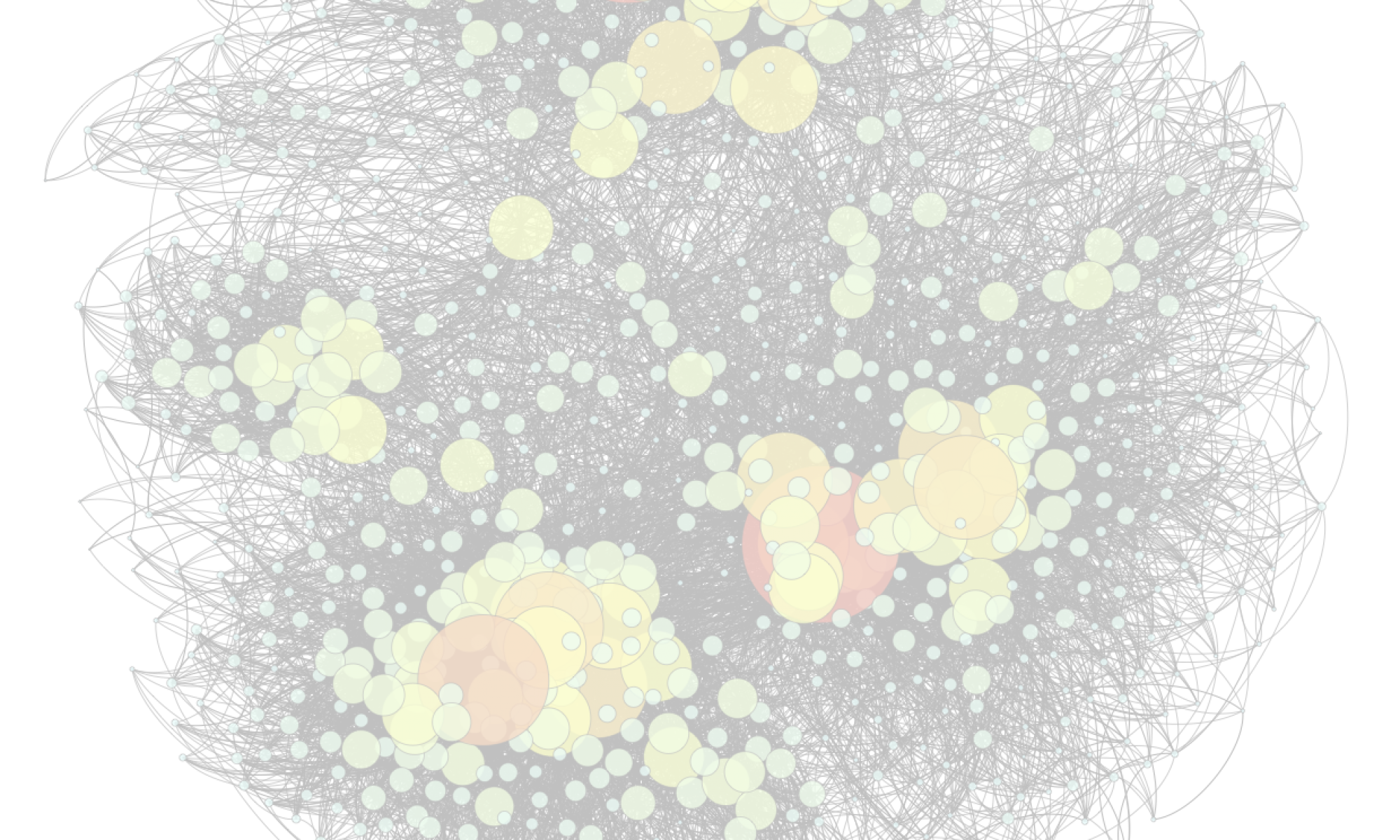

Learning on graphs: from algorithms to socio-technical analyses on AI

This thesis addresses the dual challenge of advancing Artificial Intelligence (AI) methods while critically assessing their societal impact. With AI technologies now embedded in high-stakes decision sectors such as healthcare and justice, their growing influence demands thorough examination, as reflected in emerging international regulations such as the AI Act in Europe. To address these challenges, this work leverages attributed-graph-based methods and advocates a shift from performance-focused AI models to approaches that also prioritise scalability, simplicity, and explainability.

The first part of this thesis develops a toolkit of attributed-graph-based methods and algorithms aimed at enhancing AI learning techniques. It includes a software contribution that leverages the sparsity of complex networks to reduce computational costs. It also introduces non-neural graph models for node classification and link prediction tasks, showing how these methods can outperform advanced neural networks while being more computationally efficient. Lastly, it presents a novel pattern mining algorithm that generates concise, human-readable summaries of large networks. Together, these contributions highlight the potential of these approaches to provide efficient and interpretable solutions to AI’s technical challenges.

The second part adopts an interdisciplinary approach to study AI as a socio-technical system. By framing AI as an ecosystem influenced by various stakeholders and societal concerns, it uses graph-based models to analyse interactions and tensions related to explainability, ethics, and environmental impact. A user study explores the influence of graph-based explanations on user perceptions of AI recommendations, while the building and analysis of a corpus of AI ethics charters and manifestos quantifies the roles of key actors in AI governance. A final study reveals that environmental concerns in AI are primarily framed technically, highlighting the need for a broader approach to the ecological implications of digitalisation.

- Tuesday, December 10, 2024, 11:45, 4A125

Lanfang Kong

Explainable algorithms for anomaly detection and time series forecasting

Artificial intelligence has shown remarkable performance across diverse domains, including critical ones such as medicine, finance, and justice. As a result, the explainability of black-box models is becoming increasingly important. We focus on two specific applications, anomaly detection and time series forecasting, and present XTREK and ADAPATCH, respectively.

XTREK is an unsupervised tree-based approach for explainable anomaly detection that maximizes Kendall’s tau between the anomaly scores of the source anomaly detector and those of XTREK. The tree produced by our algorithm is relatively small, so it retains the well-known off-the-shelf transparency and explainability of tree-based approaches. Its explanations are also sample-based. In particular, the anomaly score of an example is computed as the inverse of the size of its leaf, which provides meaningful explanations when comparing examples with different anomaly scores. XTREK can also be used as an in-model approach that provides concise explanations for its own decisions. Moreover, we propose an efficient computation of Kendall’s tau coefficients for determining the best split at each node of the regression tree. We show how this can be computed incrementally, which makes the running time of our algorithm almost linear (up to a logarithmic factor) in the size of the input.
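The abstract says that XTREK evaluates Kendall's tau incrementally when choosing splits. That incremental scheme is not described here, so the sketch below shows only the standard O(n log n) way to compute tau between two score lists: sort the pairs by one list, then count inversions in the other with merge sort. It assumes there are no ties.

```python
def count_inversions(seq):
    """Return (sorted copy, number of inversions) using merge sort, in O(n log n)."""
    if len(seq) <= 1:
        return list(seq), 0
    mid = len(seq) // 2
    left, inv_left = count_inversions(seq[:mid])
    right, inv_right = count_inversions(seq[mid:])
    merged, i, j = [], 0, 0
    inversions = inv_left + inv_right
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
            inversions += len(left) - i  # right[j] precedes every remaining left item
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged, inversions


def kendall_tau(x, y):
    """Kendall's tau-a between two score lists, assuming no ties in either list."""
    n = len(x)
    y_sorted_by_x = [b for _, b in sorted(zip(x, y))]
    _, discordant = count_inversions(y_sorted_by_x)
    pairs = n * (n - 1) // 2
    concordant = pairs - discordant
    return (concordant - discordant) / pairs


print(kendall_tau([1, 2, 3, 4], [1, 3, 2, 4]))  # one discordant pair out of six
```

Each inversion in `y` (once ordered by `x`) is a discordant pair. This is why the count reduces to a merge sort rather than a check of all O(n²) pairs.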

ADAPATCH is an adaptive patch-based saliency map method for explainable time series forecasting that provides local, post-hoc visual explanations. The approach highlights the patches that would lead to worse predictions if they were hidden from the black-box algorithm. With a differential encoding module in the input mask, the optimization can be performed by gradient-based perturbation. Unlike other patch-based approaches, ADAPATCH does not need patch parameters such as the length or the stride to be fixed upfront. Instead, it learns these parameters from the data, effectively adapting to different settings and application scenarios. By enforcing an upper bound on the number of patches, we ensure that the patch-level explanations provided by our algorithm can be easily interpreted by humans, as opposed to explanations consisting of a large number of single time points. Moreover, ADAPATCH requires far fewer parameters, typically linear in the number of patches rather than in the number of time steps. This makes our approach more efficient and easier to train.
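ADAPATCH learns its patch length and stride and optimizes a mask by gradient-based perturbation. For contrast, the sketch below shows the simpler fixed-patch occlusion baseline that this design avoids. The user picks `length` and `stride`, each patch is hidden in turn, and the resulting increase in forecast error is the patch's saliency. The mean-fill masking and the absolute-error criterion are our assumptions.

```python
from statistics import mean
from typing import Callable, Sequence

Forecaster = Callable[[Sequence[float]], float]


def patch_saliency(series: Sequence[float], target: float, forecast: Forecaster,
                   length: int, stride: int) -> list[tuple[tuple[int, int], float]]:
    """Rank fixed-size patches by how much hiding them worsens a one-step forecast."""
    baseline_error = abs(forecast(series) - target)
    fill = mean(series)  # assumption: a hidden patch is replaced by the series mean
    scores = []
    for start in range(0, len(series) - length + 1, stride):
        masked = list(series)
        masked[start:start + length] = [fill] * length
        error = abs(forecast(masked) - target)
        scores.append(((start, start + length), error - baseline_error))
    # Most important patches first: hiding them hurts the prediction the most.
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Toy black box that forecasts the next value as the last observed one.
ranking = patch_saliency([1, 2, 3, 4, 5, 6], target=6, forecast=lambda s: s[-1],
                         length=2, stride=2)
print(ranking[0])  # ((4, 6), 2.5): the most recent patch matters most
```

With a poorly chosen `length` or `stride`, this baseline can split or miss the relevant region. ADAPATCH's learned patch parameters are meant to address exactly this weakness.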

Both methods are model-agnostic, which means the architecture of the black-box model can be hidden from the users. They provide accurate and simple explanations, as validated by extensive experiments.

- Tuesday, December 3, 2024, 11:45, 4A125

Gabriel Damay

Dynamic Decision Trees and Community-based Graph Embeddings: towards Interpretable Machine Learning

Machine learning is the field of computer science concerned with building models and solutions from data without explicitly specifying the set of instructions internal to these models and solutions. The field has achieved great results but is now under scrutiny for, among other concerns, the inability to understand or audit its models. Interpretable Machine Learning addresses these concerns by building models that are inherently interpretable. This thesis contributes to Interpretable Machine Learning in two ways.

First, we study decision trees, a very popular family of machine learning methods for classification problems that is interpretable by design. However, real-world data is often dynamic, and few algorithms can maintain a decision tree when data can be both inserted into and deleted from the training set. We propose a new algorithm called FuDyADT to solve this problem.
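The abstract does not describe FuDyADT's internals. As a generic illustration of why deletions need care, the sketch below keeps a histogram of (feature value, label) pairs at a single node. Insertions and deletions then become counter updates, and the best Gini threshold is recomputed from the counts rather than from stored examples. This is our own simplification (one feature, one node, a full rescan of thresholds), not the FuDyADT algorithm.

```python
from collections import Counter
from typing import Optional


def gini(counts: Counter) -> float:
    """Gini impurity of a label histogram."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return 1.0 - sum((c / total) ** 2 for c in counts.values())


class DynamicStump:
    """One node over a single numeric feature, stored as a (value, label) histogram
    so that training examples can be both inserted and deleted cheaply."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()  # (feature value, label) -> multiplicity

    def insert(self, x: float, y: str) -> None:
        self.counts[(x, y)] += 1

    def delete(self, x: float, y: str) -> None:
        if self.counts[(x, y)] == 0:
            raise KeyError(f"no example ({x}, {y}) to delete")
        self.counts[(x, y)] -= 1
        if self.counts[(x, y)] == 0:
            del self.counts[(x, y)]

    def best_split(self) -> tuple[Optional[float], float]:
        """Return (threshold, weighted Gini) of the best split `x <= threshold`,
        or (None, node Gini) if no split improves on not splitting."""
        total: Counter = Counter()
        by_value: dict = {}
        for (x, y), c in self.counts.items():
            total[y] += c
            by_value.setdefault(x, Counter())[y] += c
        n = sum(total.values())
        best: tuple[Optional[float], float] = (None, gini(total))
        left: Counter = Counter()
        for x in sorted(by_value)[:-1]:  # a threshold at the largest value splits nothing off
            left.update(by_value[x])
            right = total - left
            n_left = sum(left.values())
            score = (n_left * gini(left) + (n - n_left) * gini(right)) / n
            if score < best[1]:
                best = (x, score)
        return best


stump = DynamicStump()
for x, y in [(1, "a"), (2, "a"), (3, "b"), (4, "b")]:
    stump.insert(x, y)
print(stump.best_split())  # (2, 0.0)
stump.delete(3, "b")       # relabel one example: delete it, then insert it again
stump.insert(3, "a")
print(stump.best_split())  # (3, 0.0)
```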

Second, when data is represented as a graph, a very common machine learning technique called “embedding” consists of projecting it into a vector space. However, such methods are usually not interpretable. We propose a new embedding algorithm called PaRFaITe, based on the factorization of the Personalized PageRank matrix, which is designed to provide interpretable results.
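PaRFaITe factorizes the Personalized PageRank (PPR) matrix. The abstract does not describe the factorization itself, so the sketch stops at building that matrix. Row `u` is the PPR vector of a random walk that restarts at `u` with probability `alpha`. Power iteration, `alpha = 0.15`, and the requirement that every node have at least one out-neighbour are our assumptions.

```python
def personalized_pagerank(adj, source, alpha=0.15, iters=100):
    """PPR vector of `source` by power iteration.

    adj: dict node -> list of out-neighbours (every node needs at least one).
    alpha: probability of restarting the walk at `source` at each step.
    """
    p = {v: 0.0 for v in adj}
    p[source] = 1.0
    for _ in range(iters):
        nxt = {v: 0.0 for v in adj}
        for u, neighbours in adj.items():
            share = (1 - alpha) * p[u] / len(neighbours)
            for v in neighbours:
                nxt[v] += share
        nxt[source] += alpha  # restart mass returns to the source
        p = nxt
    return p


def ppr_matrix(adj, alpha=0.15, iters=100):
    """Row u of the PPR matrix is the PPR vector personalized on u."""
    return {u: personalized_pagerank(adj, u, alpha, iters) for u in adj}


# Two triangles {0, 1, 2} and {3, 4, 5} joined by the edge 2-3 (stored in both directions).
adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2, 4, 5], 4: [3, 5], 5: [3, 4]}
M = ppr_matrix(adj)
```

On this toy graph, each row gives more weight to the source's own triangle than to the other one. This is the kind of community signal that the abstract says PaRFaITe's embedding dimensions align with.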

We study both algorithms theoretically and experimentally. We show that FuDyADT is at least comparable to state-of-the-art algorithms in the usual setting, while also being able to handle unusual settings such as deletions of data. PaRFaITe on the other hand produces embedding dimensions that align with the communities of the graph, making the embedding interpretable.

- Tuesday, November 12, 2024, 11:45, 4A125

Cyril Chhun

Methodology and Meta-Evaluation Benchmark for Automatic Story Generation

Storytelling is a central component of human culture. Multiple approaches have been proposed to explore computational storytelling, despite the inherent challenges posed by the tasks of generating stories and assessing their quality. In this thesis, we design a meta-evaluation methodology and benchmark for Automatic Story Generation (ASG). First, we lay the groundwork for conducting our meta-evaluation: we describe our chosen setting, provide definitions for the ASG and Automatic Story Evaluation (ASE) tasks, and propose an original set of six criteria for story evaluation. Then, we introduce HANNA, our corpus of Human ANnotated NArratives, which contains 1,056 stories annotated with respect to our six criteria, and show that those criteria allow for a standardized human evaluation. We use Large Language Models (LLMs) to augment HANNA with 480 new stories and 150k+ rating annotations. We observe that LLMs obtain better grades than humans, as rated by selected LLMs. After that, we perform our meta-evaluation benchmark on HANNA. We mainly observe that specific measures for ASE are needed, and that commonly used measures (e.g., BLEU) are sub-optimal. We then present our analysis of LLM performance at ASE: we find that LLMs are currently the best proxy for human evaluation of ASG and that, in our specific setting, providing detailed guidelines does not improve correlations between LLM and human ratings. These results prompt us to study whether the performance displayed by LLMs at ASE and ASG can be explained by different factors. We perform a three-part study on LLM-generated explanations, and an analysis of the impact of pretraining data on LLM performance. Notably, we find that LLMs struggle to explain their answers with substantiated claims. Finally, we outline three main research perspectives: designing specific ASE measures, further investigating LLM performance at ASG and ASE, and assessing and mitigating the impact of LLMs on society.
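A meta-evaluation asks how well an automatic measure agrees with human judgments. This is commonly quantified by a rank correlation between the measure's scores and the human ratings of the same stories. The sketch below is a minimal Spearman correlation, computed as the Pearson correlation of average ranks so that ties are handled. The abstract does not say which correlation coefficients or aggregation levels the thesis uses, so treat this as an illustration only.

```python
def average_ranks(values):
    """Ranks starting at 1; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average 1-based position of the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman's rho: Pearson correlation between the two rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


human = [1, 2, 3, 4, 5]              # toy human ratings of five stories
metric = [0.1, 0.4, 0.35, 0.8, 0.9]  # toy automatic scores for the same stories
print(spearman(human, metric))       # about 0.9: one adjacent pair is swapped
```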

References:

Of Human Criteria and Automatic Metrics: A Benchmark of the Evaluation of Story Generation (COLING 2022)

Do Language Models Enjoy Their Own Stories? Prompting Large Language Models for Automatic Story Evaluation (TACL 2024)

- Tuesday, October 29, 2024, 11:45, 4A125

Simon Coumes

Qiana: A First-Order Formalism to Quantify over Contexts and Formulas

Qiana is a logic framework for reasoning on formulas that are true only in specific contexts. In Qiana, it is possible to quantify over both formulas and contexts to express, e.g., that “everyone knows everything Alice says”. Qiana also permits paraconsistent logics within contexts, so that contexts can contain contradictions. Furthermore, Qiana is based on first-order logic, and is finitely axiomatizable, so that Qiana theories are compatible with pre-existing first-order logic theorem provers.

- Tuesday, October 15, 2024, 11:45, 4A301

Yael Amsterdamer & Daniel Deutch

Query-Guided Data Cleaning (Yael Amsterdamer)

We take an active approach to the cleaning of uncertain databases, by proposing a set of tools to guide the cleaning process. We start with a database whose tuple correctness is uncertain, and with some means of resolving this uncertainty, e.g., crowdsourcing, experts, a trained ML model or external sources. Guided by a query that defines what part of the data is of importance, our goal is to select tuples whose cleaning would effectively resolve uncertainty in query results. In other words, we develop a query-guided process for the resolution of uncertain data. Our approach combines techniques from different fields, including the use of provenance information to capture the propagation of errors to query results and Boolean interactive evaluation to decide which input tuples to clean based on their role in output derivation or effect on uncertainty.
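The abstract describes choosing which input tuples to clean based on their role in the provenance of query results. The sketch below is a much-simplified version of that idea. The provenance of an uncertain result is written as a DNF over tuple ids and evaluated under the tuples verified so far. The next tuple to verify is the unverified one that appears in the most still-possible derivations. The greedy rule and the `oracle` dictionary (standing in for the crowd, an expert, or a model) are hypothetical. The talk's actual strategy, based on Boolean interactive evaluation, is more refined.

```python
from collections import Counter


def status(lineage, known):
    """Truth of a DNF lineage under partial knowledge of which tuples are correct.

    `known` maps tuple ids to True (verified correct) or False (verified wrong).
    Returns True or False once the result is determined, or None while it is uncertain.
    """
    uncertain = False
    for monomial in lineage:
        values = [known.get(t) for t in monomial]
        if all(v is True for v in values):
            return True          # one fully verified derivation settles it
        if not any(v is False for v in values):
            uncertain = True     # this derivation could still turn out valid
    return None if uncertain else False


def next_tuple(lineage, known):
    """Hypothetical greedy rule: the unverified tuple occurring in the most live derivations."""
    freq = Counter()
    for monomial in lineage:
        if any(known.get(u) is False for u in monomial):
            continue  # a derivation using a wrong tuple can no longer hold
        for t in monomial:
            if t not in known:
                freq[t] += 1
    return freq.most_common(1)[0][0] if freq else None


def clean_until_resolved(lineage, oracle):
    """Ask the oracle about one tuple at a time until the query answer is certain."""
    known = {}
    while status(lineage, known) is None:
        t = next_tuple(lineage, known)
        known[t] = oracle[t]
    return status(lineage, known), list(known)


lineage = [{"t1", "t2"}, {"t1", "t3"}, {"t4"}]
oracle = {"t1": False, "t2": True, "t3": True, "t4": True}
print(clean_until_resolved(lineage, oracle))  # (True, ['t1', 't4']): two questions suffice
```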

Yael Amsterdamer is a Professor at the Department of Computer Science, Bar-Ilan University, and the head of the Data Management Lab. She received her Ph.D. in Computer Science from Tel-Aviv University, and has been a visiting scholar at the University of Pennsylvania, Philadelphia, PA, and jointly at Télécom Paris and Inria (Paris, France). Her research is in the field of interactive data management, spanning topics such as crowd-powered data management, interactive summarization, and data cleaning. Her research has been awarded multiple competitive grants, including Israeli Science Foundation (ISF) personal grants, an Israeli Ministry of Science (MOST) grant, and the BIU Center for Research in Applied Cryptography and Cyber Security Personal Grant.

Explanations in Data Science (Daniel Deutch)

Data Science involves complex processing over large-scale data for decision support, and much of this processing is done by black boxes such as Data Cleaning Modules, Database Management Systems, and Machine Learning modules. Decision support should be transparent, but the combination of complex computation and large-scale data poses many challenges in this respect. Interpretability has been extensively studied in both the data management and the machine learning communities, but the problem is far from solved. I will present a holistic approach to the problem based on two facets, namely counterfactual explanations and attribution-based explanations. I will demonstrate the conceptual and computational challenges, as well as some of the main results we have achieved in this context.

Daniel Deutch is a Full Professor in the Computer Science Department of Tel Aviv University. Daniel received his Ph.D. degree in Computer Science from Tel Aviv University. He was a postdoctoral fellow at the University of Pennsylvania and INRIA France. His research focuses on advanced database applications and web data management, studying both theoretical and practical aspects of issues such as data provenance, analysis of web applications and data, and dealing with data uncertainty. Daniel’s research has been disseminated in papers at the top conferences and journals on data and web data management (VLDB, SIGMOD/PODS, VLDBJ, TODS, etc.). He has won a number of research awards, including the VLDB best paper award, the Krill Prize (awarded by the Wolf Foundation), and the Yahoo! Early Career Award. His research has been awarded multiple competitive grants, including the European Research Council (ERC) Personal Research Grant and grants from the Israeli Science Foundation (ISF, twice), the US-Israel Binational Science Foundation (BSF), the Broadcom Foundation, the Israeli Ministry of Science (MOST), the Blavatnik Interdisciplinary Cyber Research Institute (ICRC), Intuit, and Intel.

- Tuesday, October 8, 2024, 11:45, 4A125

Rajaa El Hamdani & Yiwen Peng

Refining Wikidata Taxonomy using Large Language Models (Yiwen Peng)

Due to its collaborative nature, Wikidata is known to have a complex taxonomy, with recurrent issues like the ambiguity between instances and classes, the inaccuracy of some taxonomic paths, the presence of cycles, and the high level of redundancy across classes. Manual efforts to clean up this taxonomy are time-consuming and prone to errors or subjective decisions. We present WiKC, a new version of Wikidata taxonomy cleaned automatically using a combination of Large Language Models (LLMs) and graph mining techniques. Operations on the taxonomy, such as cutting links or merging classes, are performed with the help of zero-shot prompting on an open-source LLM. The quality of the refined taxonomy is evaluated from both intrinsic and extrinsic perspectives, on a task of entity typing for the latter, showing the practical interest of WiKC.
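One of the issues the abstract lists is cycles in the taxonomy. Detecting them is a standard graph task. The sketch below is an ordinary depth-first search over a subclass-of graph that returns one cycle if any exists. The graph is represented as a dictionary from each class to its direct superclasses, which is our assumed representation. This code is not taken from WiKC, where operations such as cutting links are decided with the help of an LLM.

```python
def find_cycle(subclass_of):
    """Return one cycle in a subclass-of graph as [c1, c2, ..., c1], or None if it is acyclic.

    subclass_of: dict mapping each class to the list of its direct superclasses.
    """
    state = {}  # class -> "active" (on the current DFS path) or "done"
    path = []

    def visit(node):
        state[node] = "active"
        path.append(node)
        for parent in subclass_of.get(node, []):
            if state.get(parent) == "active":
                # Back edge: `parent` is on the current path, so a cycle is closed.
                return path[path.index(parent):] + [parent]
            if parent not in state:
                cycle = visit(parent)
                if cycle:
                    return cycle
        path.pop()
        state[node] = "done"
        return None

    for node in list(subclass_of):
        if node not in state:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


print(find_cycle({"A": ["B"], "B": ["C"], "C": ["A"], "D": ["A"]}))  # ['A', 'B', 'C', 'A']
```

The recursion depth grows with the length of the longest subclass chain. For a taxonomy the size of Wikidata's, an iterative version would be safer.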

The Factuality of Large Language Models in the Legal Domain (Rajaa El Hamdani)

This paper investigates the factuality of large language models (LLMs) as knowledge bases in the legal domain, in a realistic usage scenario: we allow for acceptable variations in the answer, and let the model abstain from answering when uncertain. First, we design a dataset of diverse factual questions about case law and legislation. We then use the dataset to evaluate several LLMs under different evaluation methods, including exact, alias, and fuzzy matching. Our results show that the performance improves significantly under the alias and fuzzy matching methods. Further, we explore the impact of abstaining and in-context examples, finding that both strategies enhance precision. Finally, we demonstrate that additional pretraining on legal documents, as seen with SaulLM, further improves factual precision from 63% to 81%.
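The evaluation compares exact, alias, and fuzzy matching and lets the model abstain. The sketch below shows one way such scoring could be set up, with precision computed over the answered questions only. The whitespace and case normalization and the 0.8 threshold are our assumptions. Python's `difflib.SequenceMatcher` ratio is a stand-in for whatever fuzzy measure the paper uses. The example answers are illustrative and are not taken from the paper's dataset.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    """Lower-case and collapse whitespace (an assumed normalization)."""
    return " ".join(text.lower().split())


def is_correct(prediction: str, gold: str, aliases=(), method: str = "exact",
               threshold: float = 0.8) -> bool:
    """Score one answer with exact, alias, or fuzzy matching."""
    pred = normalize(prediction)
    targets = [normalize(gold)]
    if method in ("alias", "fuzzy"):
        targets += [normalize(a) for a in aliases]
    if method == "fuzzy":
        return any(SequenceMatcher(None, pred, t).ratio() >= threshold for t in targets)
    return pred in targets


def precision_and_coverage(answers, method: str = "exact"):
    """answers: (prediction, gold, aliases) triples; prediction is None when the model abstains."""
    answered = [(p, g, a) for p, g, a in answers if p is not None]
    correct = sum(is_correct(p, g, a, method) for p, g, a in answered)
    precision = correct / len(answered) if answered else 0.0
    coverage = len(answered) / len(answers) if answers else 0.0
    return precision, coverage


answers = [
    ("Court of Justice of the EU", "Court of Justice of the European Union",
     ["CJEU", "Court of Justice of the EU"]),
    ("1958", "1958", []),
    (None, "Article 6", []),  # the model abstained
]
for method in ("exact", "alias", "fuzzy"):
    print(method, precision_and_coverage(answers, method))
```

Because abstentions are excluded from the denominator, a model that abstains when uncertain can raise its precision at the cost of coverage. This trade-off is what the abstract's abstention experiments explore.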

- Tuesday, September 24, 2024, 11:45, 4A125

Ambroise Odonnat

Leveraging Ensemble Diversity for Robust Self-Training in the Presence of Sample Selection Bias

Self-training is a well-known approach for semi-supervised learning. It consists of iteratively assigning pseudo-labels to unlabeled data for which the model is confident and treating them as labeled examples. For neural networks, softmax prediction probabilities are often used as a confidence measure, although they are known to be overconfident, even for wrong predictions. This phenomenon is particularly intensified in the presence of sample selection bias, i.e., when data labeling is subject to some constraints. To address this issue, we propose a novel confidence measure, called T-similarity, built upon the prediction diversity of an ensemble of linear classifiers. We provide a theoretical analysis of our approach by studying stationary points and describing the relationship between the diversity of the individual members and their performance. We empirically demonstrate the benefit of our confidence measure for three different pseudo-labeling policies on classification datasets of various data modalities.
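The abstract does not give the T-similarity formula. The sketch below assumes a simple version of the idea: confidence is the mean pairwise inner product of the class-probability vectors predicted by the ensemble members. Under this assumption, confidence is high only when the members agree, even if each member is individually overconfident. The threshold and the argmax-of-the-mean labeling rule are also assumptions. Check the paper for the exact definition and for how the ensemble is made diverse.

```python
from itertools import combinations


def agreement(probas):
    """Mean pairwise inner product of ensemble members' class-probability vectors.

    Lies in [0, 1]; it equals 1 only if all members put all mass on the same class.
    """
    pairs = list(combinations(probas, 2))
    return sum(sum(a * b for a, b in zip(p, q)) for p, q in pairs) / len(pairs)


def pseudo_label(unlabeled, threshold):
    """Select (input_id, label) pairs whose ensemble agreement reaches `threshold`.

    unlabeled: list of (input_id, list of per-member probability vectors).
    The label is the argmax of the members' mean prediction.
    """
    selected = []
    for x, probas in unlabeled:
        if agreement(probas) >= threshold:
            mean = [sum(col) / len(probas) for col in zip(*probas)]
            selected.append((x, max(range(len(mean)), key=mean.__getitem__)))
    return selected


confident = [[0.9, 0.1], [0.8, 0.2], [0.95, 0.05]]  # members agree
divided = [[0.9, 0.1], [0.1, 0.9], [0.5, 0.5]]      # first member is just as sure, but alone
print(round(agreement(confident), 3), round(agreement(divided), 3))  # 0.79 0.393
print(pseudo_label([("x1", confident), ("x2", divided)], threshold=0.7))  # [('x1', 0)]
```

The first member's softmax output is 0.9 in both cases. Only the ensemble-level score separates the two inputs, which is the kind of overconfidence the abstract is concerned with.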

- Tuesday, September 10, 2024, 11:45, 4A125

Samuel Reyd & Jean-Louis Dessalles

CIRCE: a Scalable Methodology for Causal Explanations in Cyber-Physical Systems (Samuel Reyd)

Cyber-physical systems (CPS) are increasingly complex and harder for human users to understand. Integrating explainability methods within their design is a key challenge for their acceptability and management. We consider that causal explanations can provide suitable answers to address this issue. Most approaches to causal explanations, however, rely on global system models, often built offline, which implies heavy computations, delays, and interpretability issues when answering questions at runtime. We propose CIRCE: a scalable method for Contextual, Interpretable and Reactive Causal Explanations in CPS. It is an abduction method that determines the cause of a fact questioned by users at runtime. Its originality lies in finding a cause instead of an entire causal graph to explain CPS behavior, and in employing a classic local Explainable AI (XAI) technique, LIME, to approximate this cause. We validate our method via several simulations of smart home scenarios. Results indicate that CIRCE can provide relevant answers to diverse questions and scales well with the number of variables. Our approach may improve the efficiency and relevance of causality-based explanations for CPS and contribute to bridging the gap between CPS explainability and classic XAI techniques.

Simplicity bias in human-generated data (Jean-Louis Dessalles)

Texts available on the Web have been generated by human minds. We observe that simple patterns are over-represented: abcdef is more frequent than arfbxg and 1000 appears more often than 1282. We suggest that word frequency patterns can be predicted by cognitive models based on complexity minimization. Conversely, the observation of word frequencies offers an opportunity to infer particular cognitive mechanisms involved in their generation.

- Tuesday, July 9, 2024, 11:45, 4A125

Peter Fratrič

Mining behavior from a legal simulation environment: where we are and what lies ahead

This talk presents a methodological framework for the use of simulation-based methods to investigate questions of non-compliance in a legal context. Its aim is to generate observed or previously unobserved instances of non-compliance and use them to improve compliance and trust in a given socio-economic infrastructure. The framework consists of three components: a law formalization process resulting in a normative system implemented as an agent-based model, a profit-driven agent generating instances of non-compliance, and a norm extraction process transforming the generated behavior into a formal model. Early research results of practical implementation of this methodology are illustrated on a multinational tax avoidance case. Towards the end, we focus on open issues related to behavior clustering and data/process mining.

- Tuesday, July 2, 2024, 12:15, 4A301

Chadi Helwe

PhD defense practice talk

This thesis focuses on evaluating and improving the reasoning abilities of Smaller Language Models (SLMs) and Large Language Models (LLMs). It explores SLMs’ performance on complex tasks and their limitations with simpler ones. This thesis introduces LogiTorch, a Python library that facilitates the training of models on various reasoning tasks with minimal coding. It also presents TINA, a negated data augmentation technique that improves SLMs’ robustness to negation in textual entailment tasks. Further, this thesis explores LLMs’ capabilities through MAFALDA, a new benchmark for identifying and classifying reasoning fallacies, proposing a new annotation scheme and evaluation metric that considers subjectivity in reasoning. The findings indicate that humans outperform SLMs and LLMs in this reasoning task. We propose several research directions that merit further investigation, such as investigating Neuro-symbolic AI and improving the reasoning abilities of low-resource LLMs.

- Tuesday, June 18, 2024, 11:45, 4A125

Shady Elbassuoni

Data Centric Fake News Detection During Armed Conflicts

Armed conflicts continue to be a major global issue, causing widespread human suffering, displacement, and economic instability. Fake news can further fuel armed conflicts by manipulating public perception, inciting violence, and undermining efforts towards resolution. In this talk, I will argue why a one-size-fits-all approach for fake news detection is not adequate during armed conflicts. I will then present a data-centric approach for fake news detection, focusing on the Syrian civil war as a case study. The approach utilizes a knowledge graph of conflict casualties to construct a fake news dataset, and then employs meta-learning to automatically detect fake news. I will present experimental results that demonstrate the effectiveness of this approach compared to various baselines, and will conclude with a few potential avenues for future research.

- Tuesday, June 11, 2024, 12:30, 4A301

Agnieszka Ławrynowicz

Swift Linked Data Miner: Mining OWL 2 EL class expressions directly from online RDF datasets

The talk presents Swift Linked Data Miner, an interruptible algorithm that can directly mine an online Linked Data source (e.g., a SPARQL endpoint) for OWL 2 EL class expressions to extend an ontology with new axioms. The algorithm works by downloading only a small part of the Linked Data source at a time, building a smart index in memory, and swiftly iterating over the index to mine axioms. We propose a transformation function from mined axioms to RDF Data Shapes. We show, by means of a crowdsourcing experiment, that most of the axioms mined by Swift Linked Data Miner are correct and can be added to an ontology. We provide a ready-to-use Protégé plugin implementing the algorithm, to support ontology engineers in their daily modeling work.
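The abstract does not detail Swift Linked Data Miner's search over OWL 2 EL class expressions or its in-memory index. The sketch below shows only how a single candidate axiom of the form `A SubClassOf (r some B)` could be scored against an in-memory set of triples. The score is the share of `A` instances that have an `r` link to some `B` instance. The confidence measure, the prefixes, and the toy triples are illustrative assumptions.

```python
RDF_TYPE = "rdf:type"


def instances(triples, cls):
    """Subjects typed with `cls`."""
    return {s for s, p, o in triples if p == RDF_TYPE and o == cls}


def existential_confidence(triples, sub_cls, prop, filler_cls):
    """Share of `sub_cls` instances with a `prop` link to some `filler_cls` instance,
    i.e. how well the data supports the axiom  sub_cls SubClassOf (prop some filler_cls)."""
    subs = instances(triples, sub_cls)
    fillers = instances(triples, filler_cls)
    witnesses = {s for s, p, o in triples if p == prop and s in subs and o in fillers}
    return len(witnesses) / len(subs) if subs else 0.0


triples = {
    (":alice", RDF_TYPE, ":Researcher"), (":bob", RDF_TYPE, ":Researcher"),
    (":carol", RDF_TYPE, ":Researcher"), (":uni", RDF_TYPE, ":University"),
    (":alice", ":worksAt", ":uni"), (":bob", ":worksAt", ":uni"),
    (":carol", ":worksAt", ":lab"),  # :lab is not typed as a University
}
print(existential_confidence(triples, ":Researcher", ":worksAt", ":University"))  # 2 of 3 researchers
```

A miner would generate many such candidates and keep those above some threshold. Streaming triples from an endpoint in chunks, as the talk describes, would replace the single in-memory set used here.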

Agnieszka Ławrynowicz is an Associate Professor at the Faculty of Computer Science and Telecommunications, Poznan University of Technology, and head of the Semantics and Knowledge Engineering Group. She is a member of the Scientific Council of the Polish Association for Artificial Intelligence, of ECCAI, and of the program and organizing committees of leading international conferences in the field of artificial intelligence and knowledge engineering (e.g., ISWC, K-CAP, EKAW, WWW, ECAI). She is chair of the Knowledge Engineering track at the conference of the Polish Association for Artificial Intelligence and a member of the editorial boards of the journals Transactions on Graph Data and Knowledge and Semantic Web. She has led or participated in several research projects funded by the European Commission, Norwegian funds, the National Science Center, and the National Center for Research and Development, and she participates as a member of TAILOR, the European network of research laboratories on trustworthy artificial intelligence based on the integration of reasoning, learning, and optimization. She was a scholarship holder in the Marie Curie program of the European Commission for a project on web mining at the University of Ulster, and she won a grant from a program financed by the Foundation for Polish Science for a project in collaboration with Stanford University. She also received an award for an outstanding monograph in computer science from the Committee on Informatics of the Polish Academy of Sciences, as well as a “Scientist of the Future” award. She supervised the most innovative engineering thesis in Poland (a competition under the auspices of the IEEE), as well as other award winners working in the field of artificial intelligence. She serves as an ethics expert for the European Commission.

- Tuesday, May 28, 2024, 11:45, 4A125

Concept.AI

DIG team

From Wikipedia: “Concept is a deduction party board game released in 2013. The game was designed by Alain Rivollet and Gaëtan Beaujannot and published by Repos Production. It has collected multiple awards and nominations including the Jeu de l’Année prize in Cannes in 2014.”

What Wikipedia does not say is that a team of AI experts has been working on an AI system to solve Concept. This session of the DIG seminar will see the unveiling of their work.

- Tuesday, May 21, 2024, 11:45, 4A125

Surprise talks

DIG PhD students and an emeritus professor

A series of talks about scientific topics, each containing a single mistake. The goal for the audience is to spot the mistake. Speakers get one point for each member of the audience who did not spot the mistake — but no points at all if no one found the mistake.

- Tuesday, March 26, 2024, 11:45, 4A125

Mehwish Alam

Deep Learning for Analyzing On-line Media Discourse

This talk will mainly discuss the results of two related projects that I secured as a senior researcher at Karlsruhe Institute of Technology, Germany. The first, ITflows – IT Tools for Managing Migration Flows, funded by the European Union under the H2020 program, focused on predicting migration flows to enhance humanitarian support. The second, ReNewRS – Responsible News Recommender Systems (funded by the Baden-Württemberg Stiftung), addressed the question “Do online news recommender systems promote social polarization or even radicalization?” by investigating the influence of algorithmic news selection on shaping public opinion.

- Tuesday, February 13, 2024, 11:45, 4A125

Fabian Suchanek

Societal questions around large language models

I am trying to collect all societal issues that can come up in the context of large language models — from copyright to security and environmental problems. The talk will present what I found so far, and I will be happy to have your feedback. The talk is based on a lecture that I gave on the topic.

- Tuesday, January 30, 2024, 11:45, 4A125

Nils Holzenberger

The AI, Law and Philosophy workshop at JURIX 2023

On December 18, 2023, I attended the AI, Law and Philosophy workshop at the JURIX conference in Maastricht. This seminar covers the presentations I attended and the people I met, including a summary of the topics and main discussion points at the workshop, as well as the presentation of my own paper. I also informally discussed a variety of research topics with workshop participants and will report on some of them. I will conclude with the main highlights of the workshop.

- Tuesday, January 23, 2024, 11:45, 4A125

Mariam Barry

Adaptive Scalable Online Learning for Handling Heterogeneous Streaming Data in Large-Scale Banking Infrastructure

In this thesis, we address algorithmic and infrastructure challenges that arise when performing online machine learning over high-volume data streams from heterogeneous sources. The research covers big data summarization, the construction of dynamically updated industrial knowledge graphs, online change detection, and the operationalization of streaming models in production. First, we introduced StreamFlow, an incremental algorithm and system for big data summarization that generates feature vectors suitable for both batch and online machine learning tasks. These enriched features significantly improve both the training time and the accuracy of batch and online machine learning models. Second, we proposed Stream2Graph, a stream-based solution for the dynamic and incremental construction and updating of enterprise knowledge graphs. Experimental results indicated that combining graph features with online learning notably improves machine learning outcomes. Third, we presented StreamChange, an explainable online change detection model for big data streams with constant space and time complexity. Real-world experiments demonstrated superior performance compared to state-of-the-art models, particularly in detecting both gradual and abrupt changes. Lastly, we demonstrated the operationalization of online machine learning in production, enabling horizontal scaling and real-time incremental learning from streaming data. Experiments on feature-evolving datasets with millions of dimensions validated the effectiveness of our MLOps pipelines. Our design ensures model versioning, monitoring, auditability, and reproducibility, and confirms that online learning models are more efficient than batch methods in terms of both time and space complexity.

- Tuesday, December 19, 2023, 11:45, 4A125

Rajaa El Hamdani

Towards Zero-Shot Knowledge Base Construction with Pretrained Large Language Models

Joint work with Mehwish Alam, Thomas Bonald, Fragkiskos Malliaros

Knowledge bases are critical tools for structuring and understanding information, yet creating them from scratch is expensive and time-consuming.

This paper presents a methodology for Knowledge Base Construction (KBC) using Pretrained Large Language Models (PLLMs), focusing in particular on extracting structured data from natural language text. Our objective is to evaluate the effectiveness of PLLMs, specifically GPT-4, for zero-shot KBC in the legal domain, using Wikipedia articles as our primary data source. The approach is distinctive in being domain- and text-agnostic, so it can scale to other fields simply by extending the taxonomy.

Our initial findings show that while GPT-4 exhibits high F1 scores for some properties, it struggles with those requiring deep domain understanding. Interestingly, GPT-4 also surfaced verifiable facts not present in our ground truth, indicating its potential for uncovering novel information.

- Tuesday, December 12, 2023, 11:45, 4A125

Charbel-Raphael Segerie

An introduction to AI Safety

Artificial intelligence is advancing rapidly. While these technologies are awe-inspiring, models like ChatGPT or Bing Chat can be easily manipulated, even though they were specifically developed to be polite and benevolent towards the user.

In this presentation, we will address three major technical flaws of these models. First, they remain large black boxes, and we cannot guarantee that their actions will conform to our expectations. Second, they lack robustness: the models are trained on a particular dataset and must therefore generalize to new situations during deployment. The fact that Bing Chat threatened users even though it was trained to help them illustrates this failure of generalization. Third, it is difficult to specify the desired objective to a model precisely, given the complexity and diversity of human values.

We will then discuss different solution paradigms: specification techniques based on reinforcement learning (RLHF and its variants), interpretability (how information is represented in neural networks, robustly editing a language model’s knowledge by modifying its memory, …), and scalable oversight (training and alignment techniques that are likely to keep working even with human-level AIs).

- Tuesday, November 21, 2023, 11:45, 4A301

Simon Delarue and Thomas Bonald

Sparse Graph Neural Networks with Scikit-network (Simon Delarue)

Joint work with Thomas Bonald

In recent years, Graph Neural Networks (GNNs) have undergone rapid development and have become an essential tool for building representations of complex relational data. Large real-world graphs, characterised by sparsity in relations and features, require dedicated tools that existing dense tensor-centred approaches cannot easily provide. To address this need, we introduce a GNN module in Scikit-network, a Python package for graph analysis, that uses sparse matrices for both graph structures and features. Our contribution improves GNN efficiency without requiring significant computational resources, unifies graph analysis algorithms and GNNs in a single framework, and prioritises user-friendliness.
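To illustrate why sparse matrices suit this setting, here is a minimal sketch of one GCN-style graph-convolution layer written directly with SciPy sparse matrices. It is not Scikit-network's GNN API: the helper names `normalize_adjacency` and `gcn_layer`, the symmetric normalization with self-loops, and the ReLU activation are illustrative assumptions.

```python
import numpy as np
from scipy import sparse


def normalize_adjacency(adjacency):
    """Hypothetical helper: return D^-1/2 (A + I) D^-1/2 as a sparse CSR matrix."""
    n = adjacency.shape[0]
    # Add self-loops so each node keeps part of its own signal.
    a_hat = sparse.csr_matrix(adjacency) + sparse.identity(n, format="csr")
    degrees = np.asarray(a_hat.sum(axis=1)).ravel()
    d_inv_sqrt = sparse.diags(1.0 / np.sqrt(degrees))
    return sparse.csr_matrix(d_inv_sqrt @ a_hat @ d_inv_sqrt)


def gcn_layer(norm_adjacency, features, weights):
    """Hypothetical helper: one graph-convolution layer, ReLU(A_norm X W)."""
    # Multiply the sparse features by the dense weights first: the result is
    # a dense (n, k) array, usually much smaller than the (n, d) feature matrix.
    hidden = features @ weights
    # Sparse-by-dense product aggregates neighbour representations.
    return np.maximum(norm_adjacency @ hidden, 0.0)


# Toy usage: path graph 0-1-2, one-hot (identity) features, all-ones weights.
adjacency = sparse.csr_matrix(
    np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
)
features = sparse.identity(3, format="csr")
weights = np.ones((3, 2))
out = gcn_layer(normalize_adjacency(adjacency), features, weights)
print(out.shape)  # (3, 2)
```

Neither the adjacency nor the feature matrix is ever densified; only the (n, k) hidden representation is dense, which is the kind of saving the abstract attributes to a sparse design.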

A Consistent Diffusion-Based Algorithm for Semi-Supervised Graph Learning (Thomas Bonald)

Joint work with Nathan De Lara

The task of semi-supervised classification is to assign labels to all nodes of a graph based on the labels known for a few nodes, called the seeds. One of the most popular algorithms relies on the principle of heat diffusion, where the labels of the seeds are spread by thermo-conductance and the temperature of each node at equilibrium is used as a score function for each label. In this paper, we prove that this algorithm is not consistent unless the temperatures of the nodes at equilibrium are centered before scoring. This crucial step not only makes the algorithm provably consistent on a block model but also brings significant performance gains on real graphs.
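A minimal sketch of the heat-diffusion classifier described above, with centering as an option. Assumptions not taken from the talk: the graph is a dense symmetric NumPy array with no isolated nodes, equilibrium temperatures are approximated by a fixed number of neighbour-averaging iterations, and "centering" is taken to mean subtracting, for each label, the mean equilibrium temperature of the non-seed nodes. The function name `diffusion_classify` is hypothetical.

```python
import numpy as np


def diffusion_classify(adjacency, seeds, n_labels, n_iter=500, center=True):
    """Heat-diffusion label propagation, one diffusion per label (sketch).

    adjacency: dense symmetric (n, n) array with no isolated nodes
    seeds: dict mapping seed node index -> label in range(n_labels)
    """
    n = adjacency.shape[0]
    # Transition matrix P = D^-1 A: each step sets every node to the mean
    # temperature of its neighbours.
    transition = adjacency / adjacency.sum(axis=1, keepdims=True)
    seed_nodes = np.array(list(seeds.keys()))
    seed_labels = np.array(list(seeds.values()))
    free_nodes = np.setdiff1d(np.arange(n), seed_nodes)
    scores = np.zeros((n, n_labels))
    for label in range(n_labels):
        # Seeds with this label are hot (1); all other seeds are cold (0).
        boundary = (seed_labels == label).astype(float)
        temperature = np.zeros(n)
        temperature[seed_nodes] = boundary
        for _ in range(n_iter):
            temperature = transition @ temperature
            temperature[seed_nodes] = boundary  # seed temperatures stay fixed
        if center:
            # Assumed centering: subtract the mean temperature of non-seed nodes.
            temperature = temperature - temperature[free_nodes].mean()
        scores[:, label] = temperature
    labels = scores.argmax(axis=1)
    labels[seed_nodes] = seed_labels  # seeds keep their known labels
    return labels, scores


# Toy usage: path graph 0-1-2-3 with one seed at each end.
adjacency = np.zeros((4, 4))
for i in range(3):
    adjacency[i, i + 1] = adjacency[i + 1, i] = 1
labels, scores = diffusion_classify(adjacency, {0: 0, 3: 1}, n_labels=2)
print(labels)  # [0 0 1 1]
```

On this symmetric toy path, centering leaves the predicted labels unchanged; the consistency result in the abstract concerns block models, which this example does not attempt to reproduce.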

- Tuesday, September 26, 2023, 11:45, 4A101

Nedeljko Radulovic

Post-hoc Explainable AI for Black Box Models on Tabular Data

Current state-of-the-art Artificial Intelligence (AI) models have proven very successful in solving various tasks, such as classification, regression, Natural Language Processing (NLP), and image processing. The resources available today allow us to train very complex AI models to solve problems in almost any field: medicine, finance, justice, transportation, forecasting, etc. As AI models have become popular and widespread, the need to ensure trust in them has grown. Because of their complexity, today’s AI models cannot be interpreted and understood by humans. In this thesis, we focus on a specific research area, Explainable Artificial Intelligence (xAI), which aims to provide approaches for interpreting complex AI models and explaining their decisions. We present two approaches, STACI and BELLA, which focus on classification and regression tasks, respectively, for tabular data.

Both methods are deterministic, model-agnostic, post-hoc approaches, which means that they can be applied to any black-box model after it has been built. Interpretability thus comes as added value, without compromising the black-box model’s performance. Our methods provide accurate, simple, and general interpretations of both the whole black-box model and its individual predictions. We confirmed their high performance through extensive experiments and a user study.

- Tuesday, September 19, 2023, 11:45, 4A301

Julien Lie-Panis

Models of reputation-based cooperation. Bridging the Gap between Reciprocity and Signaling.

Human cooperation is often understood through the lens of reciprocity. In classic models, cooperation is sustained because it is reciprocal: individuals who bear costs to help others can then expect to be helped in return. Another framework is honest signal theory. According to this approach, cooperation can be sustained when helpers reveal information about themselves, which in turn affects receivers’ behavior. Here, we aim to bridge the gap between these two approaches, in order to better characterize human cooperation. We show how integrating both approaches can help explain the variability of human cooperation, its extent, and its limits.

In chapter 1, we introduce evolutionary game theory and its application to human behavior.

In chapter 2, we show that cooperation with strangers can be understood as a signal of time preferences. In equilibrium, patient individuals cooperate more often, and individuals who reveal higher preference for the future inspire more trust. We show how our model can help explain the variability of cooperation and trust.

In chapter 3, we turn to the psychology of revenge. Revenge is often understood in terms of enforcing cooperation, or equivalently, deterring transgressions: vengeful individuals pay costs, which may be offset by the benefit of a vengeful reputation. Yet, revenge does not always seem designed for optimal deterrence. Our model reconciles the deterrent function of revenge with its apparent quirks, such as our propensity to overreact to minuscule transgressions, and to forgive dangerous behavior based on a lucky positive outcome.

In chapter 4, we turn to dysfunctional forms of cooperation and signaling. We posit that outrage can sometimes act as a second-order signal, demonstrating investment in another, first-order signal. We then show how outrage can lead to dishonest displays of commitment, and escalating costs.

In chapter 5, we extend the model in chapter 2 to include institutions. Institutions are often invoked as solutions to hard cooperation problems: they stabilize cooperation in contexts where reputation is insufficient. Yet, institutions are at the mercy of the very problem they are designed to solve. People must devote time and resources to create new rules and compensate institutional operatives. We show that institutions for hard cooperation problems can emerge nonetheless, as long as they rest on an easy cooperation problem. Our model shows how designing efficient institutions can allow humans to extend the scale of cooperation.

Finally, in chapter 6, we discuss the merits of mathematical modeling in the social sciences.
