The DIG team is part of Télécom Paris, a member of Institut Polytechnique de Paris, France. We work on knowledge graphs, language models, foundational models, learning over tabular data, graph representation learning, graph mining, and stream mining. The team develops methods for representing, integrating, and reasoning over complex, dynamic data to enable interpretable and trustworthy AI. Applications range from general-purpose AI to domain-specific areas such as healthcare and law.

News

Jun 02, 2026: Julia Stoyanovich's Talk on Uncertainty in the Data Engineering Pipeline

We were happy to host Julia Stoyanovich, director of the Center for Responsible AI at New York University, in the DIG seminar for her talk on Uncertainty in the Data Engineering Pipeline: From Imputation to Explanation.

Thank you for coming by, Julia!

Jun 02, 2026: Capucine Brillet Joins DIG as a PhD Student

We’re happy to welcome Capucine Brillet, who joins the DIG team as a new PhD student!

She will work on language models and knowledge bases, inspired by cognitive science. Capucine graduated with a Master’s in Mathematics and its Applications from Université Paris Cité, and joins us from ENS, where she worked on understanding representational collapse in neural networks and the brain.

Welcome to the team, Capucine!

May 19, 2026: Robert Wrembel's Talk on Data Integration

We were happy to host Robert Wrembel from Poznan University of Technology (PUT) for an inspiring talk on Data Integration: Remaining Challenges and Research Paths.

Abstract: Data integration (DI) has been a cornerstone of computer science research for decades, resulting in a few established reference architectures. They generally fall into three categories: virtual (federated and mediated), physical (data warehouse), and hybrid (data lake, data lakehouse, and data mesh). Regardless of the paradigm, these architectures depend on an integration layer, implemented by means of sophisticated software designed to orchestrate and execute DI processes. The integration layer is responsible for ingesting data from various sources (typically heterogeneous and distributed) and for homogenizing data into formats suitable for future processing and analysis. On the one hand, in all business domains, large volumes of highly heterogeneous data are produced, e.g., medical systems, smart cities, smart agriculture, which require further advancements in the data integration technologies. On the other hand, the widespread adoption of artificial intelligence (AI) solutions is now extending towards DI, offering alternative solutions, opening new research paths, and generating new open problems. Emerging paradigms, such as Data Spaces and the Model Context Protocol, further advance DI. This talk will then present (1) overview the research field of DI, (3) highlight remaining challenges, and (3) outline ML/AI solutions for DI. The findings presented in the talk are based on my experience in running research and development DI projects for various business entities.

Short Biography: Robert Wrembel (PhD, Dr. Habil.) is a professor in the Faculty of Computing and Telecommunications at Poznan University of Technology (PUT), Poland. He received his habilitation in 2008, specializing in database systems and data warehouses. His primary research includes data integration, data quality, databases, data warehouses, and data lakes. He held a few administrative roles at PUT, including two terms as deputy dean of the Faculty of Computing and Management (2008–2012) and the Faculty of Computing (2012–2016). Since Jan 2023, he has chaired the Data Processing Technologies research group at PUT.

May 18, 2026: Papers accepted to IJCAI 2026 demo track

Congratulations to our researchers on having two papers accepted at the demo track of IJCAI-ECAI 2026!

-

“LELA: An End-to-end LLM-based Entity Linking Framework with Zero-shot Domain Adaptation”. Samy Haffoudhi, Nikola Dobričić, Fabian Suchanek and Nils Holzenberger Link to the paper:https://suchanek.name/work/publications/ijcai-2026-lela.pdf

-

“Neuro-Symbolic Logical Reasoning with Textual Entailment”. Zacchary Sadeddine and Fabian M. Suchanek Link to the paper:https://suchanek.name/work/publications/ijcai-2026-vanessa.pdf

May 07, 2026: Paper accepted at ICML 2026

Congratulations to our researchers on having a paper accepted at ICML 2026!

- “Tailoring Strictly Proper Scoring Rules for Downstream Tasks: An Application to Causal Inference”. Roman Plaud, Alexandre Perez-Lebel, Antoine Saillenfest, Thomas Bonald, Marine Le Morvan, Gaël Varoquaux and Matthieu Labeau Link to the paper: https://hal.science/hal-05643379

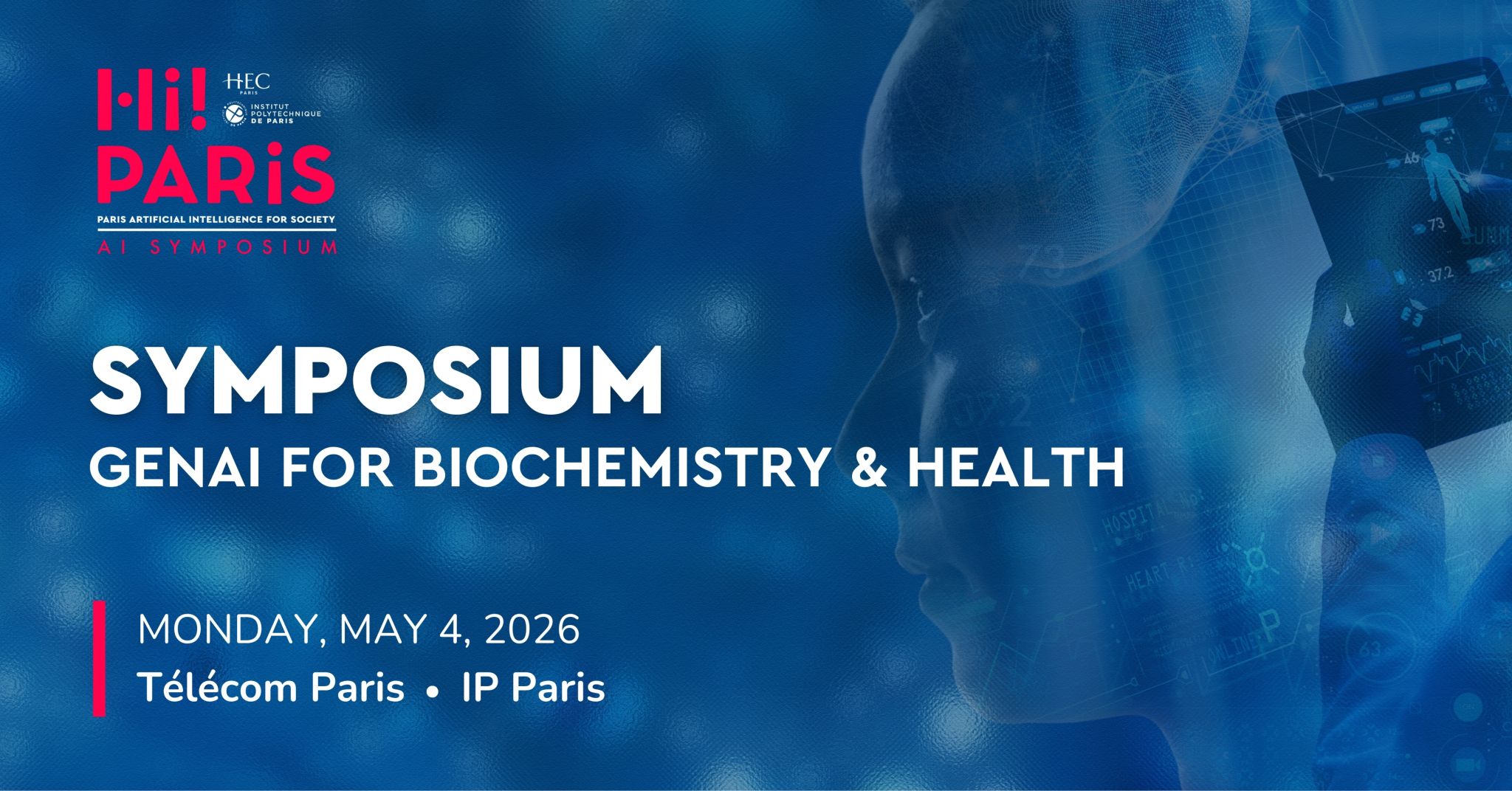

Apr 23, 2026: DIG Team Co-Organizes the HiParis Symposium 2026

We are excited to announce that the DIG team will co-organize the HiParis Symposium on Generative AI for Biochemistry and Health!

For more details, refer to the official event link: https://hi-paris.fr/research/symposium/

Apr 22, 2026: DIG Team Co-Organizes the AKBC Workshop at EMNLP 2026

We are excited to announce that the DIG team will co-organize the 10th workshop on Automated Knowledge Base Construction (AKBC 2026)! It will take place at EMNLP 2026 in Budapest, and accepts vision, regular, and challenge papers.

For more details, refer to the official Workshop Link: https://www.akbc.ws/2026/.

Apr 21, 2026: Elena Simperl Insightful Talk on Knowledge Engineering Assistants

We were happy to host Elena Simperl from King’s College London for an inspiring talk on Designing Better Knowledge Engineering Assistants.

Abstract: Knowledge engineering focuses on the creation and stewardship of knowledge‑based systems. As a field, it occupies a unique niche between software engineering, which involves crafting software that represents knowledge computationally, and AI, where software can reason upon knowledge representations to emulate human thought. Like many areas of knowledge work, the field is being reshaped by generative AI. This brings new opportunities to address long‑standing challenges of scale and inclusivity, while also raising concerns about accuracy, bias, and the responsible use of automation. In this talk, I will explore recent work in AI and human computer interaction that examines how to design better knowledge engineering assistants.

Biography: Elena Simperl is a Professor of Computer at King’s College London and the Director of Research for the Open Data Institute (ODI). She is a Fellow of the British Computer Society and the Royal Society of Arts, and a Hans Fischer Senior Fellow. Elena’s work is at the intersection between AI and social computing. She features in the top 100 most influential scholars in knowledge engineering of the last decade and in the Women in AI 2000 ranking. She is the president of the Semantic Web Sciences Association.

Apr 20, 2026: Mehwish Alam Successfully Defends Her Habilitation

Congratulations to Mehwish Alam for her successful Habilitation defense entitled “The Knowledge in Neurosymbolic Artificial Intelligence”!

Apr 01, 2026: Mohamed Islem KARA BERNOU Joining DIG as a PhD Student

Mohamed Islem KARA BERNOU is joining the DIG team for a PhD to work on verifying the outputs of language models with the help of knowledge bases.

Short Bio: Mohamed Islem KARA BERNOU is a machine learning researcher with experience working on LLMs, code optimization, and applied AI systems. He is a graduate of Université Paris Cité (M2 Machine Learning), with research experience at NYU Abu Dhabi and Rakuten Tech Europe, and publications at WWW25 and PACT25. His recent projects focused on LLM-guided compiler optimization and recommender systems.

Mohamed Islem KARA BERNOU will be advised by Yanzhu Guo and Fabian Suchanek, and is based in office 4C20.

Welcome, Mohamed Islem KARA BERNOU!

Mar 16, 2026: Welcome Chenwei Wan to the DIG Team

We’re happy to welcome Chenwei Wan to the DIG team as a research engineer! Chenwei will work on Non-named entity representation in knowledge bases, with the goal to start a thèse CIFRE with Schlumberger. Welcome, ChenWei!

Mar 09, 2026: Paper accepted at EACL 2026

Congratulations to Cristian Santini, Marieke van Erp, and Mehwish Alam for “It’s All About the Confidence: An Unsupervised Approach for Multilingual Historical Entity Linking using Large Language Models”

Feb 18, 2026: DIG has five articles accepted at ICLR 2026

-

TabStruct: Measuring Structural Fidelity of Tabular Data. Xiangjian Jiang, Nikola Simidjievski, Mateja Jamnik

-

Query-Level Uncertainty in Large Language Models. Lihu Chen, Fabian M. Suchanek, Gaël Varoquaux, Gerard de Melo

-

Efficient Resource Constrained Training of Vision Transformers via Subspace Optimization. Le-Trung Nguyen, Enzo Tartaglione, Van-Tam Nguyen

-

Study of Training Dynamics for Memory-Constrained Fine-tuning. Aël Quélennec, Nour Hezbri, Pavlo Mozharovskyi, Van-Tam Nguyen, Enzo Tartaglione

-

INSTANT: Compressing Gradients and Activations for Resource-Efficient Training. Tuan-Kiet Doan, Trung-Hieu Tran, Enzo Tartaglione, Nikola Simidjievski, Van-Tam Nguyen

Jan 26, 2026: IMT Pedagogy Prize honors free software course

Congratulations to Marc Jeanmougin and Théo Zimmermann for receiving the “Engagement, Pedagogy, and Teaching” award (emerging initiative category) from Institut Mines-Télécom (IMT) for their innovative course on open-source contributions. This program provides students with hands-on experience by having their code modifications integrated into real-world software projects. (News Source)

Dec 01, 2025: Yanzhu Guo joined DIG

We’re happy that Yanzhu Guo joined us as an assistant professor in the team! Welcome, Yanzhu!

Nov 01, 2025: Best Paper Award at ISWC 2025

Yiwen Peng, Thomas Bonald and Fabian Suchanek received the Best Paper Award at ISWC 2025 for their paper “FLORA: Unsupervised Knowledge Graph Alignment by Fuzzy Logic.”