Lanfang Kong

Explainable algorithms for anomaly detection and time series forecasting

Artificial intelligence has shown dominant performance across diverse domains, including critical ones such as medicine, finance, and justice. As a result, the explainability of black-box models is becoming increasingly important. We focus on two specific applications, anomaly detection and time series forecasting, and present XTREK and ADAPATCH, respectively.

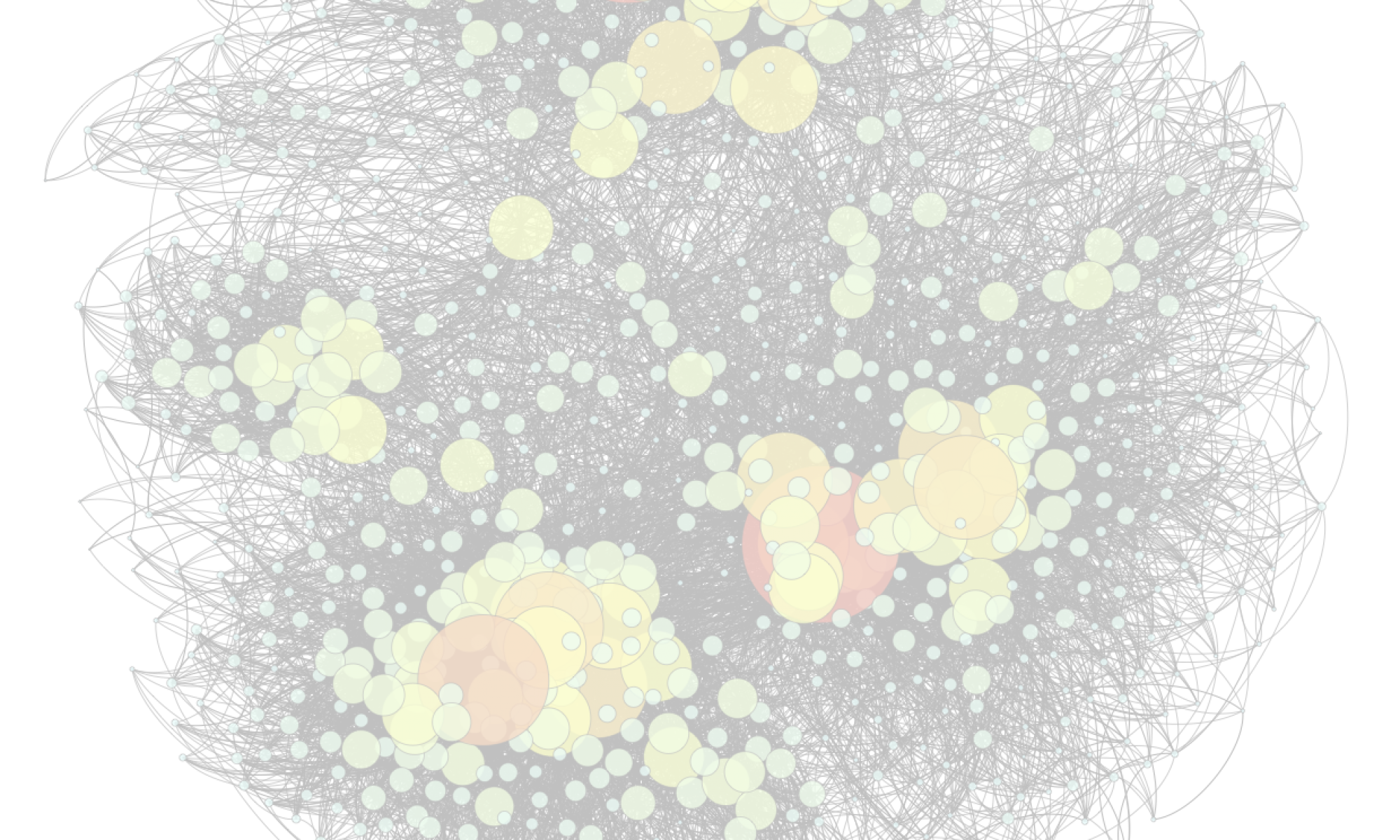

XTREK is an unsupervised tree-based approach for explainable anomaly detection that maximizes Kendall’s tau between the anomaly scores of the source anomaly detector and those of XTREK. The tree produced by our algorithm is relatively small, so it retains the off-the-shelf transparency and explainability for which tree-based approaches are known. Moreover, its explanations are sample-based. In particular, the anomaly score of an example is the inverse of the size of its leaf, which provides meaningful explanations when comparing examples with different anomaly scores. XTREK can also be used as an in-model approach that provides concise explanations for its own decisions. Finally, we propose an efficient computation of Kendall’s tau coefficients when determining the best split at each node of the regression tree. We show how these coefficients can be computed incrementally, which makes the running time of our algorithm almost linear (up to a logarithmic factor) in the size of the input.
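To make the split criterion concrete, the following is a minimal sketch, not the XTREK implementation. It assumes a single numeric feature, a single split, and the tau-a variant of Kendall’s tau (tied pairs count as zero), and it recomputes tau naively in quadratic time for every candidate threshold instead of using the incremental computation described above. The function names and the toy data are hypothetical.

```python
from itertools import combinations


def kendall_tau_a(x, y):
    """Naive O(n^2) Kendall's tau-a: (concordant - discordant) / number of pairs."""
    n = len(x)
    s = 0
    for i, j in combinations(range(n), 2):
        prod = (x[i] - x[j]) * (y[i] - y[j])
        if prod > 0:
            s += 1  # concordant pair
        elif prod < 0:
            s -= 1  # discordant pair; tied pairs contribute nothing
    return s / (n * (n - 1) / 2)


def leaf_scores(values, threshold):
    """Score each example by the inverse of the size of the leaf it falls into."""
    in_left = [v <= threshold for v in values]
    n_left = sum(in_left)
    n_right = len(values) - n_left
    return [1.0 / n_left if left else 1.0 / n_right for left in in_left]


def best_split(values, source_scores):
    """Return the (threshold, tau) pair that maximizes tau with the source scores."""
    candidates = sorted(set(values))[:-1]  # keep both leaves non-empty
    best = None
    for t in candidates:
        tau = kendall_tau_a(source_scores, leaf_scores(values, t))
        if best is None or tau > best[1]:
            best = (t, tau)
    return best


# Toy data (hypothetical): the last example is the one the source detector flags.
values = [0.1, 0.2, 0.3, 0.4, 5.0]
source_scores = [0.12, 0.10, 0.13, 0.11, 0.90]
threshold, tau = best_split(values, source_scores)
```

On this toy data the selected threshold isolates the last example in a leaf of size one, so it receives the largest score, while the four remaining examples share a leaf and a lower score.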

ADAPATCH is an adaptive patch-based saliency map method for explainable time series forecasting that provides local, post-hoc visual explanations. The approach highlights the patches that would lead to worse predictions if they were hidden from the black-box algorithm. With a differential encoding module applied to the input mask, the optimization can be carried out by gradient-based perturbation. Unlike existing patch-based approaches, ADAPATCH does not need patch parameters such as the length or the stride to be specified upfront. Instead, it learns those parameters from the data, thereby adapting to different settings and application scenarios. By enforcing an upper bound on the number of patches, we ensure that the patch-level explanations provided by our algorithm can be easily interpreted by humans, as opposed to explanations consisting of a large number of single time points. Moreover, ADAPATCH requires far fewer parameters, typically linear in the number of patches rather than in the number of time steps. This makes our approach more efficient and easier to train.
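The idea of scoring a patch by how much the prediction degrades when it is hidden can be sketched as follows. This is an illustration under stated assumptions, not the ADAPATCH encoding: each patch is assumed to be a soft window described by two parameters (a center and a width), hidden values are assumed to be replaced by a constant baseline, and `toy_forecaster` is a hypothetical stand-in for the black-box model. No gradient step is shown; the smooth window only indicates why such parameters could be tuned by gradient-based optimization.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def soft_patch_mask(length, center, width, sharpness=4.0):
    """Smooth indicator of [center - width/2, center + width/2]; close to 1 inside."""
    lo, hi = center - width / 2, center + width / 2
    return [
        sigmoid(sharpness * (t - lo)) * sigmoid(sharpness * (hi - t))
        for t in range(length)
    ]


def hide(series, mask, baseline=0.0):
    """Blend masked time steps toward a baseline value (an assumed perturbation)."""
    return [(1 - m) * x + m * baseline for x, m in zip(series, mask)]


def toy_forecaster(series):
    """Hypothetical black box: predicts the mean of the last three values."""
    return sum(series[-3:]) / 3


series = [1.0, 1.0, 1.0, 1.0, 1.0, 4.0, 5.0, 6.0]
reference = toy_forecaster(series)


def importance(center, width):
    """Change in the prediction when the patch is hidden from the forecaster."""
    mask = soft_patch_mask(len(series), center, width)
    return abs(toy_forecaster(hide(series, mask)) - reference)


early = importance(center=1.0, width=3.0)  # patch over the first time steps
late = importance(center=6.0, width=3.0)   # patch over the last time steps
```

Here the late patch changes the toy prediction far more than the early one, so it would be the patch to highlight. Each patch contributes two parameters regardless of the series length, which illustrates the parameter count being linear in the number of patches.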

Both methods are model-agnostic, which means that the architecture of the black-box model can be hidden from users. They provide accurate and simple explanations, as validated by extensive experiments.